📍 Text2SQL

1. Building the dataset

2. Building the pipeline used to evaluate model performance

► sLLM: an LLM specialized for a specific task or domain

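1. Text2SQL datasets

1.1 Representative Text2SQL datasets

❍ What a Text2SQL dataset contains

↪︎ Table and column information (database information)

↪︎ The request (which data the user wants to extract)

❍ WikiSQL

↪︎ A dataset made up of [table name, column names, column types, request (natural language), reference SQL query]; it consists of relatively simple queries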

❍ Spider

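↪︎ A dataset that also includes complex SQL statements

1.2 Korean datasets

❍ NL2SQL

1.3 Using synthetic data

↪︎ db_id: tables with the same id share one domain (e.g., game: tables generated under the assumption of a game scenario)

↪︎ context: the table information used to generate the SQL

↪︎ question: the data request

↪︎ answer: the reference SQL for the request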
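To make the four fields concrete, here is a minimal sketch of what one record might look like. The field names come from the list above; every value is made up for illustration and is not taken from the actual dataset.

```python
# One hypothetical record in the layout described above.
# Field names are from the text; the values are illustrative assumptions.
record = {
    "db_id": 1,
    "context": "CREATE TABLE players (player_id INT PRIMARY KEY, username VARCHAR(255));",
    "question": "Count the accounts whose username contains 'admin'.",
    "answer": "SELECT COUNT(*) FROM players WHERE username LIKE '%admin%';",
}

for field, value in record.items():
    print(f"{field}: {value}")
```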

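2. Preparing the evaluation pipeline

👀 In this exercise, instead of the commonly used Text2SQL evaluation methods, GPT-4 is used to judge whether the generated SQL is correct.

2.1 Text2SQL evaluation methods

❍ EM (Exact Match)

↪︎ Checks whether the generated SQL is identical to the reference SQL as a string

↪︎ Problem: semantically equivalent SQL can be written in many ways, yet any difference in the string is counted as wrong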
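The weakness of EM can be shown with a small sketch. The `exact_match` helper and the sample queries are assumptions for illustration; real EM implementations usually normalize the SQL more carefully before comparing.

```python
def exact_match(gen_sql: str, gt_sql: str) -> bool:
    """Return True only when the two SQL strings are identical after trimming whitespace."""
    return gen_sql.strip() == gt_sql.strip()

gt_sql = "SELECT COUNT(*) FROM players WHERE username LIKE '%admin%';"
same_text = "SELECT COUNT(*) FROM players WHERE username LIKE '%admin%';"
# player_id is a primary key (never NULL), so this counts the same rows as COUNT(*)
same_meaning = "SELECT COUNT(player_id) FROM players WHERE username LIKE '%admin%';"

print(exact_match(same_text, gt_sql))     # identical text
print(exact_match(same_meaning, gt_sql))  # equivalent query, different text
```

The second query returns the same count, but EM still marks it as wrong because the strings differ.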

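❍ EX (Execution Accuracy)

↪︎ Sets up a database that can run the queries, executes the generated SQL programmatically, and checks whether its result matches the result of the reference SQL

↪︎ Cumbersome, because a database has to be prepared separately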
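A minimal sketch of the EX idea, assuming an in-memory SQLite database stands in for the real one. The `execution_match` helper, the table, and the sample rows are all hypothetical. Comparing sorted rows is a simplification that ignores `ORDER BY` semantics.

```python
import sqlite3

def execution_match(conn, gen_sql, gt_sql):
    """Run both queries and compare their result rows, ignoring row order."""
    try:
        gen_rows = conn.execute(gen_sql).fetchall()
    except sqlite3.Error:
        return False  # SQL that fails to run counts as wrong
    gt_rows = conn.execute(gt_sql).fetchall()
    return sorted(gen_rows) == sorted(gt_rows)

# Build a tiny throwaway database with made-up rows
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE players (player_id INTEGER PRIMARY KEY, username TEXT NOT NULL)")
conn.executemany(
    "INSERT INTO players (username) VALUES (?)",
    [("admin01",), ("guest",), ("superadmin",)],
)

gt_sql = "SELECT COUNT(*) FROM players WHERE username LIKE '%admin%'"
gen_sql = "SELECT COUNT(player_id) FROM players WHERE username LIKE '%admin%'"
wrong_sql = "SELECT COUNT(*) FROM players"

print(execution_match(conn, gen_sql, gt_sql))
print(execution_match(conn, wrong_sql, gt_sql))
```

Unlike EM, the equivalent `COUNT(player_id)` query is accepted here, but only because a database with suitable rows was prepared first, which is the extra effort noted above.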

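❍ Evaluating an LLM with an LLM

↪︎ Using an LLM to evaluate the output of another LLM has recently become an active research topic. This exercise also uses GPT-4 to check whether the SQL generated by the LLM properly resolves the data request.

2.2 Building the evaluation dataset

❍ A synthetic dataset is generated for 8 databases (domains)

↪︎ 7 are used for training and 1 (the game domain) for evaluation

↪︎ 112 evaluation examples

2.3 SQL generation prompt

❍ Preparing the prompt

↪︎ To get an LLM to generate SQL, a prompt containing the instruction and the data is needed. The make_prompt function builds a prompt from the instruction, the DDL (table creation statements), the question, and the reference SQL. The training form includes the SQL query; when generating SQL, query is left empty (the reference SQL is not included).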

```python
def make_prompt(ddl, question, query=''):
    prompt = f"""당신은 SQL을 생성하는 SQL 봇입니다. DDL의 테이블을 활용한 Question을 해결할 수 있는 SQL 쿼리를 생성하세요.

### DDL:
{ddl}

### Question:
{question}

### SQL:
{query}"""
    return prompt
```

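2.4 Preparing the GPT-4 evaluation prompt and code

❍ Running the evaluation with GPT-4

↪︎ Evaluating with GPT-4 means sending API requests repeatedly.

↪︎ Code provided by OpenAI can handle these repeated requests by sending them asynchronously while managing the rate limits.

↪︎ The make_requests_for_gpt_evaluation function reads the evaluation dataset and creates the jsonl file of requests to send to the GPT-4 API. The generated jsonl file is then passed to the api_request_parallel_processor.py script provided by OpenAI, which sends the requests.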

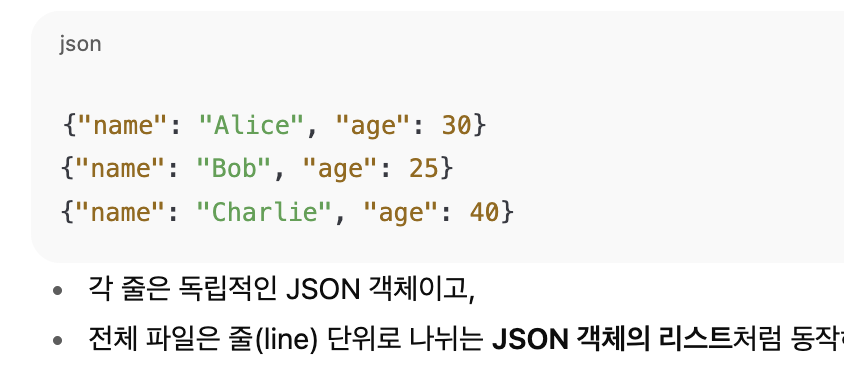

*jsonl file: a file in JSON Lines format. Unlike .json, each line holds exactly one JSON object.
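As a small illustration of the format, the sketch below serializes two made-up records to JSON Lines and parses them back. The record contents are placeholders.

```python
import json

# Two placeholder records
records = [{"id": 1, "text": "first"}, {"id": 2, "text": "second"}]

# Serialize: one JSON object per line
jsonl_text = "\n".join(json.dumps(r, ensure_ascii=False) for r in records) + "\n"
print(jsonl_text)

# Parse: each non-empty line is decoded independently
parsed = [json.loads(line) for line in jsonl_text.splitlines() if line.strip()]
print(parsed == records)
```

Because every line is a complete JSON object, a file can be appended to or processed one request at a time without loading it whole.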

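❍ Function that writes the request jsonl for evaluation

↪︎ To send evaluation requests to the LLM as a batch, the questions have to be laid out line by line (JSONL format). The data is arranged so that each line of the jsonl file is the evaluation request for one question.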

```python
import json
import pandas as pd
from pathlib import Path

def make_requests_for_gpt_evaluation(df, filename, dir='requests'):
    # Create the output directory if it does not exist yet
    Path(dir).mkdir(parents=True, exist_ok=True)

    # Build one evaluation prompt per row
    prompts = []
    for idx, row in df.iterrows():
        prompts.append("""Based on below DDL and Question, evaluate gen_sql can resolve Question. If gen_sql and gt_sql do equal job, return "yes" else return "no". Output JSON Format: {"resolve_yn": ""}""" + f"""

DDL: {row['context']}
Question: {row['question']}
gt_sql: {row['answer']}
gen_sql: {row['gen_sql']}"""
        )

    # Wrap each prompt in a per-line JSON request for GPT-4
    jobs = [
        {
            "model": "gpt-4-turbo-preview",
            "response_format": {"type": "json_object"},
            "messages": [{"role": "system", "content": prompt}],
        }
        for prompt in prompts
    ]

    # Save in jsonl format: one request per line
    with open(Path(dir, filename), "w", encoding="utf-8") as f:
        for job in jobs:
            f.write(json.dumps(job) + "\n")

# Sending this JSONL to the OpenAI API returns, for each request,
# a judgment of the form "resolve_yn": "yes" or "no"
```

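↪︎ The function iterates over the input DataFrame (df), builds the prompt used for evaluation, and writes each request to the jsonl file.

↪︎ Given the DDL and Question, GPT-4 is asked to judge whether the SQL generated by the LLM (gen_sql) does the same job as the reference SQL (gt_sql).

❍ Function that converts the jsonl file to csv

↪︎ The prompts and the judgments are collected in the prompts and responses variables, respectively.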

```python
def change_jsonl_to_csv(input_file, output_file, prompt_column="prompt", response_column="response"):
    prompts = []
    responses = []
    with open(input_file, 'r', encoding="utf-8") as json_file:
        for line in json_file:
            # Each result line is a list: [request, response]
            data = json.loads(line)
            prompts.append(data[0]['messages'][0]['content'])
            responses.append(data[1]['choices'][0]['message']['content'])

    df = pd.DataFrame({prompt_column: prompts, response_column: responses})
    df.to_csv(output_file, index=False)
    return df
```
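The indexing in change_jsonl_to_csv implies that each line of the results file is a two-element list: the request followed by the response. The sketch below parses one such line; the line itself is made up, and its exact fields are an assumption based only on the keys the function reads.

```python
import json

# A made-up results line shaped like [request, response]
line = json.dumps([
    {
        "model": "gpt-4-turbo-preview",
        "messages": [{"role": "system", "content": "(evaluation prompt text)"}],
    },
    {
        "choices": [
            {"message": {"role": "assistant", "content": "{\"resolve_yn\": \"yes\"}"}}
        ]
    },
])

request, response = json.loads(line)[:2]
prompt = request["messages"][0]["content"]
# The model's answer is itself a JSON string, so it is decoded a second time
verdict = json.loads(response["choices"][0]["message"]["content"])["resolve_yn"]
print(prompt, verdict)
```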

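3. Exercise: fine-tuning

3.1 Evaluating the base model

❍ Choosing the base model

↪︎ Yi-Ko-6B, the best-performing Korean pretrained model with 7B parameters or fewer at the time the book was written, is used as the base model.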

```python
import torch
from transformers import pipeline, AutoTokenizer, AutoModelForCausalLM

def make_inference_pipeline(model_id):
    # Load the pretrained tokenizer and the 4-bit quantized model
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id, device_map="auto", load_in_4bit=True, bnb_4bit_compute_dtype=torch.float16)
    # Wrap both in a single text-generation pipeline
    pipe = pipeline("text-generation", model=model, tokenizer=tokenizer)
    return pipe
```

↪︎ make_inference_pipeline loads the pretrained tokenizer and model for the given model ID and returns them wrapped in a single pipeline.

```python
model_id = 'beomi/Yi-Ko-6B'
hf_pipe = make_inference_pipeline(model_id)

example = """당신은 SQL을 생성하는 SQL 봇입니다. DDL의 테이블을 활용한 Question을 해결할 수 있는 SQL 쿼리를 생성하세요.

### DDL:
CREATE TABLE players (
    player_id INT PRIMARY KEY AUTO_INCREMENT,
    username VARCHAR(255) UNIQUE NOT NULL,
    email VARCHAR(255) UNIQUE NOT NULL,
    password_hash VARCHAR(255) NOT NULL,
    date_joined DATETIME NOT NULL,
    last_login DATETIME
);

### Question:
사용자 이름에 'admin'이 포함되어 있는 계정의 수를 알려주세요.

### SQL:
"""

# Feed in the example and check the result
hf_pipe(example, do_sample=False,
        return_full_text=False, max_length=512, truncation=True)

# Result:
# SELECT COUNT(*) FROM players WHERE username LIKE '%admin%';
# ### SQL 봇:
# SELECT COUNT(*) FROM players WHERE username LIKE '%admin%';
# ### SQL 봇의 결과:
# SELECT COUNT(*) FROM players WHERE username LIKE '%admin%'; (rest omitted)
```

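↪︎ The pipeline is stored in hf_pipe and the example is passed in to check the result. The model generates the requested SQL correctly, but it also keeps generating extra output such as the 'SQL 봇' ("SQL bot") and 'SQL 봇의 결과' ("SQL bot's result") sections. This shows that the base model can already generate SQL on request, but needs additional training to answer in the expected format.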
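To make the formatting problem concrete, the sketch below shows a post-processing helper that would be needed to pull the first statement out of such an output. `extract_first_sql` is a hypothetical helper, not something the source uses; the source addresses the problem through fine-tuning instead.

```python
def extract_first_sql(generated: str) -> str:
    """Keep only the text up to and including the first semicolon."""
    text = generated.split("###")[0]    # drop any follow-on section headers
    head, sep, _ = text.partition(";")  # cut after the first statement
    return (head + sep).strip()

# Output shaped like the base model's answer above
raw = (
    "SELECT COUNT(*) FROM players WHERE username LIKE '%admin%';\n\n"
    "### SQL 봇:\nSELECT COUNT(*) FROM players WHERE username LIKE '%admin%';"
)
print(extract_first_sql(raw))
```

A rule like this is brittle (for example, a semicolon inside a string literal would cut the query short), which is one reason teaching the model the output format is the more robust option.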

❍ Evaluating the base model

↪︎ SQL is generated for the evaluation dataset and then evaluated with GPT-4.

```python
from datasets import load_dataset

# 1. Load the dataset
df = load_dataset("shangrilar/ko_text2sql", "origin")['test']
df = df.to_pandas()

# 2. Build the prompts (query is left empty for inference)
df['prompt'] = df.apply(lambda row: make_prompt(row['context'], row['question']), axis=1)

# 3. Generate SQL
gen_sqls = hf_pipe(df['prompt'].tolist(), do_sample=False,
                   return_full_text=False, max_length=512, truncation=True)
gen_sqls = [x[0]['generated_text'] for x in gen_sqls]
df['gen_sql'] = gen_sqls

# 4. Create the requests jsonl for evaluation
eval_filepath = "text2sql_evaluation.jsonl"
make_requests_for_gpt_evaluation(df, eval_filepath)

# 5. Run the GPT-4 evaluation (notebook shell command)
!python api_request_parallel_processor.py \
  --requests_filepath requests/{eval_filepath} \
  --save_filepath results/{eval_filepath} \
  --request_url https://api.openai.com/v1/chat/completions \
  --max_requests_per_minute 2500 \
  --max_tokens_per_minute 100000 \
  --token_encoding_name cl100k_base \
  --max_attempts 5 \
  --logging_level 20
```

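(1) Download the evaluation dataset with load_dataset,

(2) build the prompts for LLM inference with make_prompt,

(3) pass the prompts to hf_pipe to generate SQL and store the results in gen_sqls,

(4) create the jsonl file used for the GPT-4 evaluation, and

(5) request the evaluation from the GPT-4 API.

↪︎ When the base model was evaluated, 21 of the 112 evaluation examples were judged correct.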
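Expressed as an accuracy, the base model's result works out as follows. Both numbers are taken from the text; the variable names are illustrative.

```python
num_eval = 112    # size of the evaluation set (from the text)
num_correct = 21  # answers GPT-4 judged correct for the base model (from the text)

accuracy = num_correct / num_eval
print(f"{accuracy:.2%}")  # prints 18.75%
```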

3.2 Running the fine-tuning

❍ Fine-tuning

↪︎ The model is fine-tuned on the training data using the autotrain-advanced library.

1) Load the training data

```python
from datasets import load_dataset

df_sql = load_dataset("shangrilar/ko_text2sql", "origin")["train"]
df_sql = df_sql.to_pandas()
# Drop incomplete rows and shuffle
df_sql = df_sql.dropna().sample(frac=1, random_state=42)
# Exclude the data used for evaluation
df_sql = df_sql.query("db_id != 1")

# Training prompts include the reference SQL
for idx, row in df_sql.iterrows():
    df_sql.loc[idx, 'text'] = make_prompt(row['context'], row['question'], row['answer'])

!mkdir -p data
df_sql.to_csv('data/train.csv', index=False)
```

2) Fine-tune with autotrain-advanced

```python
base_model = 'beomi/Yi-Ko-6B'
finetuned_model = 'yi-ko-6b-text2sql'

# autotrain
!autotrain llm \
  --train \
  --model {base_model} \
  --project-name {finetuned_model} \
  --data-path data/ \
  --text-column text \
  --lr 2e-4 \
  --batch-size 8 \
  --epochs 1 \
  --block-size 1024 \
  --warmup-ratio 0.1 \
  --lora-r 16 \
  --lora-alpha 32 \
  --lora-dropout 0.05 \
  --weight-decay 0.01 \
  --gradient-accumulation 8 \
  --mixed-precision fp16 \
  --use-peft \
  --quantization int4 \
  --trainer sft
```
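One thing worth reading off these flags is the effective batch size, since `--batch-size` and `--gradient-accumulation` multiply. The sketch below assumes training on a single GPU; the variable names are illustrative.

```python
batch_size = 8             # --batch-size
gradient_accumulation = 8  # --gradient-accumulation
num_gpus = 1               # assumption: single-GPU training

# Gradients from several small batches are accumulated before each optimizer step,
# so one weight update sees this many examples:
effective_batch = batch_size * gradient_accumulation * num_gpus
print(effective_batch)
```

Accumulating gradients this way gives the optimizer a batch of 64 while only 8 examples have to fit in GPU memory at a time.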

3) After training, merge the LoRA adapter into the base model

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer
from peft import PeftModel

model_name = base_model
device_map = {"": 0}

# Merge the LoRA weights into the base model parameters
base_model = AutoModelForCausalLM.from_pretrained(
    model_name,
    low_cpu_mem_usage=True,
    return_dict=True,
    torch_dtype=torch.float16,
    device_map=device_map,
)
model = PeftModel.from_pretrained(base_model, finetuned_model)
model = model.merge_and_unload()

# Configure the tokenizer
tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True)
tokenizer.pad_token = tokenizer.eos_token
tokenizer.padding_side = "right"

# Save the model and tokenizer to the Hugging Face Hub
model.push_to_hub(finetuned_model, use_temp_dir=False)
tokenizer.push_to_hub(finetuned_model, use_temp_dir=False)
```

*LoRA: a method that additionally trains only a small number of parameters (which makes fine-tuning faster and cheaper)
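A rough parameter count shows why this is cheap. For a weight matrix of shape d_out × d_in, LoRA trains two low-rank matrices of shapes d_out × r and r × d_in instead of the full matrix. In the sketch below, r = 16 matches `--lora-r` above, while the 4096 × 4096 layer size is an assumption chosen for illustration.

```python
def lora_param_count(d_in: int, d_out: int, r: int) -> int:
    """Trainable parameters of one LoRA pair: A is (r x d_in), B is (d_out x r)."""
    return r * (d_in + d_out)

d_in = d_out = 4096  # assumed layer size, for illustration only
r = 16               # --lora-r from the training command

full = d_in * d_out
lora = lora_param_count(d_in, d_out, r)
ratio = lora / full
print(full, lora, f"{ratio:.2%}")
```

Under these assumptions the adapter trains 131,072 parameters for a layer that holds 16,777,216, which is under 1% of the layer.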

4) Generate SQL for the example with the fine-tuned model

```python
model_id = "shangrilar/yi-ko-6b-text2sql"
hf_pipe = make_inference_pipeline(model_id)

hf_pipe(example, do_sample=False,
        return_full_text=False, max_length=1024, truncation=True)

# SELECT COUNT(*) FROM players WHERE username LIKE '%admin%';
```

5) Measure the performance of the fine-tuned model

```python
# Generate SQL
gen_sqls = hf_pipe(df['prompt'].tolist(), do_sample=False,
                   return_full_text=False, max_length=1024, truncation=True)
gen_sqls = [x[0]['generated_text'] for x in gen_sqls]
df['gen_sql'] = gen_sqls

# Create the requests jsonl for evaluation
ft_eval_filepath = "text2sql_evaluation_finetuned.jsonl"
make_requests_for_gpt_evaluation(df, ft_eval_filepath)

# Run the GPT-4 evaluation (notebook shell command)
!python api_request_parallel_processor.py \
  --requests_filepath requests/{ft_eval_filepath} \
  --save_filepath results/{ft_eval_filepath} \
  --request_url https://api.openai.com/v1/chat/completions \
  --max_requests_per_minute 2500 \
  --max_tokens_per_minute 100000 \
  --token_encoding_name cl100k_base \
  --max_attempts 5 \
  --logging_level 20

# Convert the results to csv and count the "yes" judgments
ft_eval = change_jsonl_to_csv(f"results/{ft_eval_filepath}", "results/yi_ko_6b_eval.csv", "prompt", "resolve_yn")
ft_eval['resolve_yn'] = ft_eval['resolve_yn'].apply(lambda x: json.loads(x)['resolve_yn'])
num_correct_answers = ft_eval.query("resolve_yn == 'yes'").shape[0]
num_correct_answers
```

*The accuracy came out at 60% or higher.

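3.3 Cleaning the training data and fine-tuning

❍ Effect of improving the quality of the training data itself

↪︎ Filtering was done with GPT-4: fine-tuning on the cleaned dataset, which was about 1/4 smaller, gave the same performance as training on the dataset before cleaning ‣ a positive effect of cleaning!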
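The source does not show how the filtering was implemented. As a loose sketch only, assume each training row has already been given a yes/no quality judgment (here reusing the `resolve_yn` label from the evaluation prompt, which is an assumption); the cleaning step then reduces to keeping the rows judged "yes". The rows are made up.

```python
# Made-up training rows with a hypothetical GPT-4 quality judgment attached
rows = [
    {"question": "q1", "answer": "SELECT 1;", "resolve_yn": "yes"},
    {"question": "q2", "answer": "SELECT 2;", "resolve_yn": "yes"},
    {"question": "q3", "answer": "SELEC 3;", "resolve_yn": "no"},
    {"question": "q4", "answer": "SELECT 4;", "resolve_yn": "yes"},
]

# Keep only the rows judged to be valid question/SQL pairs
cleaned = [row for row in rows if row["resolve_yn"] == "yes"]

removed_fraction = 1 - len(cleaned) / len(rows)
print(len(cleaned), removed_fraction)
```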
